AI Can Generate SQL — But It Still Doesn’t Understand Your Data (or Your Business)

AI Can Generate SQL — But It Still Doesn’t Understand Your Data (or Your Business)

Everyone talks about text-to-SQL like it’s solved.

Just pass a prompt → get SQL → done.

But when you actually build it…

👉 Everything breaks.

The Illusion

At first, it feels simple:

“Give schema + user query → generate SQL”

And yes, for simple queries:

SELECT COUNT(*) FROM users;

It works.

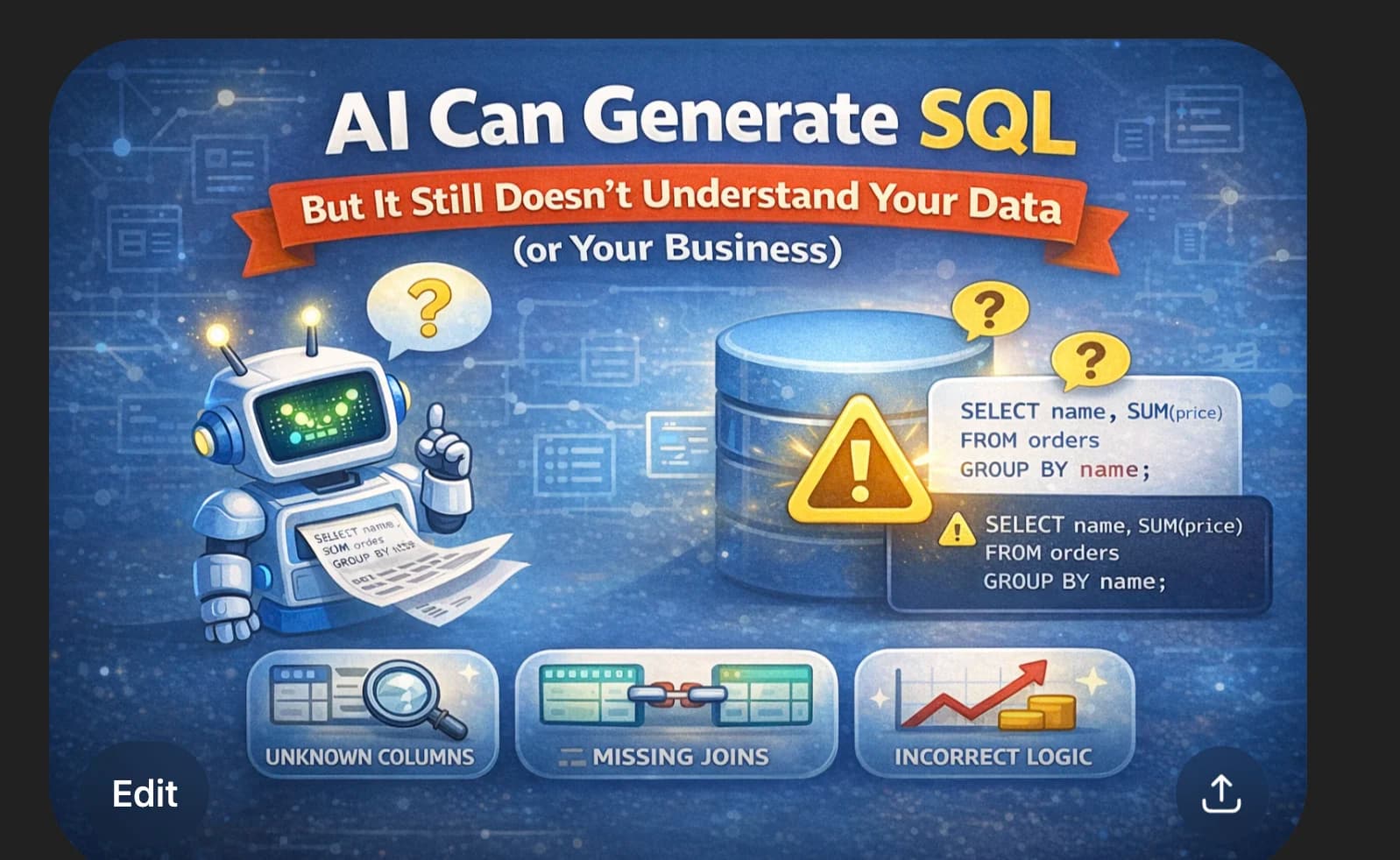

Reality: Systems Are Not Simple

Real users ask:

“Show top customers last month by revenue excluding refunds”

Now the model must:

- understand joins

- filter refunds

- calculate revenue correctly

And this is where things fail.

🧠 How Real System Actually Works

Instead of raw prompting, I built a pipeline.

User Query

↓

Context Builder (Schema + Docs)

↓

LLM (SQL Generation)

↓

Validation Layer

↓

Execution

↓

Response + Feedback

1. Schema Injection (Foundation)

Before calling the LLM, I pass structured schema:

- tables

- columns

- relationships

Example:

Table: orders

Columns: id, user_id, price, status

Table: users

Columns: id, name

👉 This helps the model generate valid SQL

But:

👉 Large schema = confusion

👉 Small schema = missing context

2. RAG (Retrieval-Augmented Context)

Schema alone is not enough.

So I added context retrieval (RAG):

- business definitions

- query examples

- known patterns

Example:

"Revenue = SUM(price) where status = 'paid'"

👉 This guides the model

But still:

👉 AI does not truly understand this 👉 It just uses it probabilistically

⚠️ The Hidden Problem: More Context ≠ Better Results

At first, it feels logical:

“If we give more context, the AI will perform better.”

So we try:

- full database schema

- detailed documentation

- multiple examples

But something unexpected happens.

👉 The model starts hallucinating more.

Why This Happens

LLMs don’t understand context like humans.

They:

- process tokens probabilistically

- try to match patterns

- get confused with too much input

When context grows:

- important signals get diluted

- irrelevant tables affect output

- model mixes unrelated concepts

Real Example

You provide:

- 20 tables

- 200+ columns

User asks:

“Show top customers”

AI might:

- pick wrong table

- join unrelated tables

- use incorrect columns

👉 Not because it's bad 👉 But because too much context reduces clarity

3. The AI Agent (Generation Layer)

The LLM acts like an agent.

Input:

- user query

- schema

- retrieved context

Output:

- SQL query

But problems still happen:

- hallucinated joins

- wrong aggregations

- incorrect filters

4. Validation Layer (MOST IMPORTANT)

Before executing SQL, I validate:

- table existence

- column existence

- allowed operations

- query limits

👉 Without this → system breaks

5. Feedback Loop (Continuous Improvement)

After execution:

- log bad queries

- track failures

- refine prompts

- improve retrieved context

👉 System improves over time

❗ The Real Problem: Business Understanding

Even after all this:

AI still fails.

Because it doesn’t understand business meaning.

Example:

“Revenue”

In real systems:

- exclude refunds

- exclude failed payments

- include or exclude taxes

👉 AI doesn’t know this unless defined

And even then:

👉 It may still apply it incorrectly

⚠️ Why AI Still Fails

Even with:

- schema injection

- RAG

- validation

AI still:

- guesses intent

- lacks reasoning

- produces logically wrong SQL

🧠 Key Insight

AI is not replacing backend logic.

👉 It requires backend engineering around it

🚧 Where I Am Right Now

I’m still improving this system every day.

- refining prompts

- improving schema selection

- improving RAG quality

- handling edge cases

👉 It’s not perfect

But it’s getting better.

🏁 Final Thought

Don’t ask:

“Can AI generate SQL?”

Ask:

“Can I trust this in production?”

That’s the real challenge.