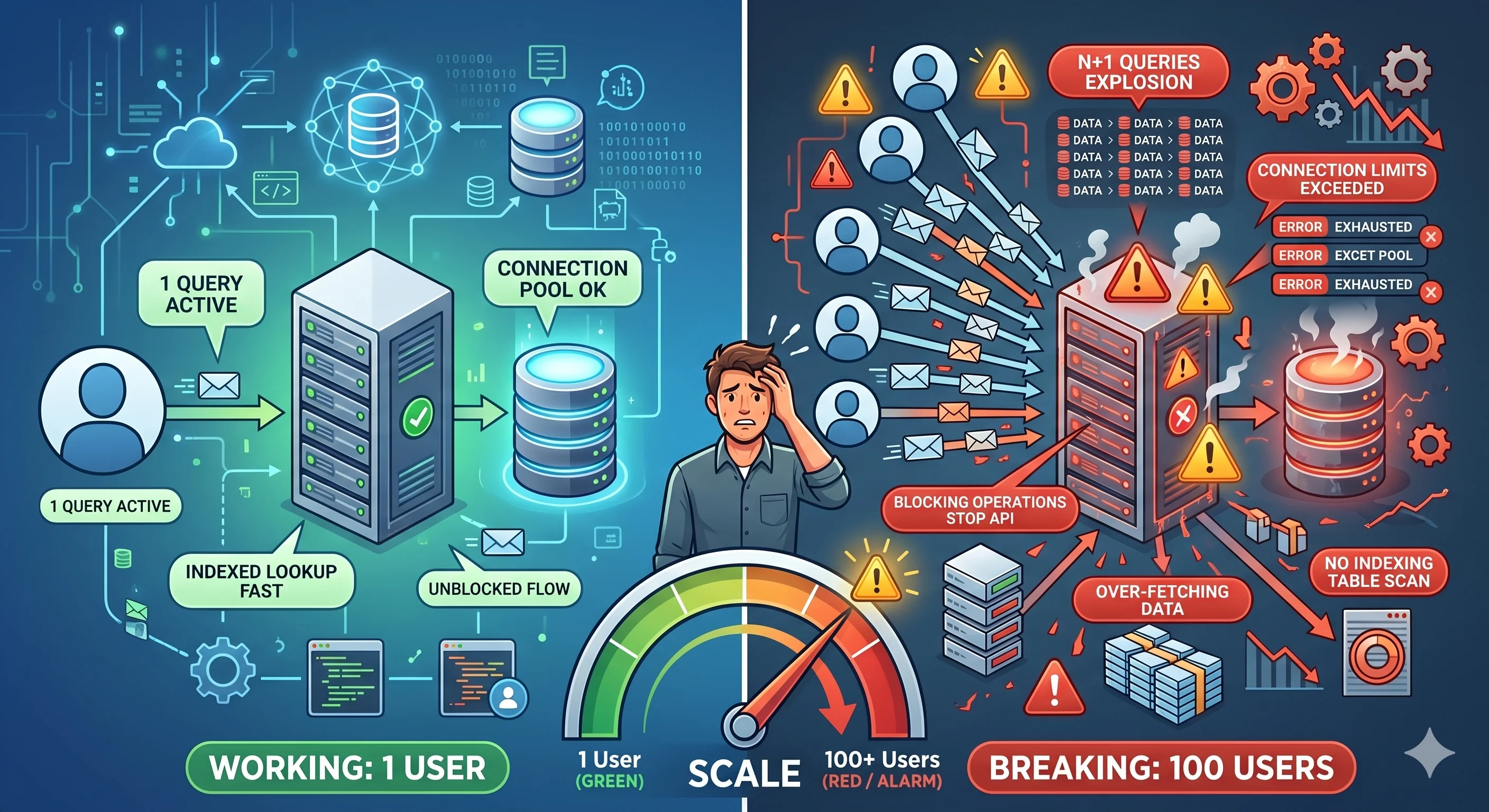

Why Your System Breaks at 100 Users (Not 1)

Everything works perfectly.

With 1 user.

Then you get 100 users…

And suddenly:

- APIs slow down

- Database load spikes

- Errors appear

Nothing changed in your code.

So what broke?

👉 Your assumptions.

The Illusion

Most developers test like this:

- Local machine

- Single request

- Small dataset

Everything looks fast.

But production is different:

- Many users

- Concurrent requests

- Shared resources

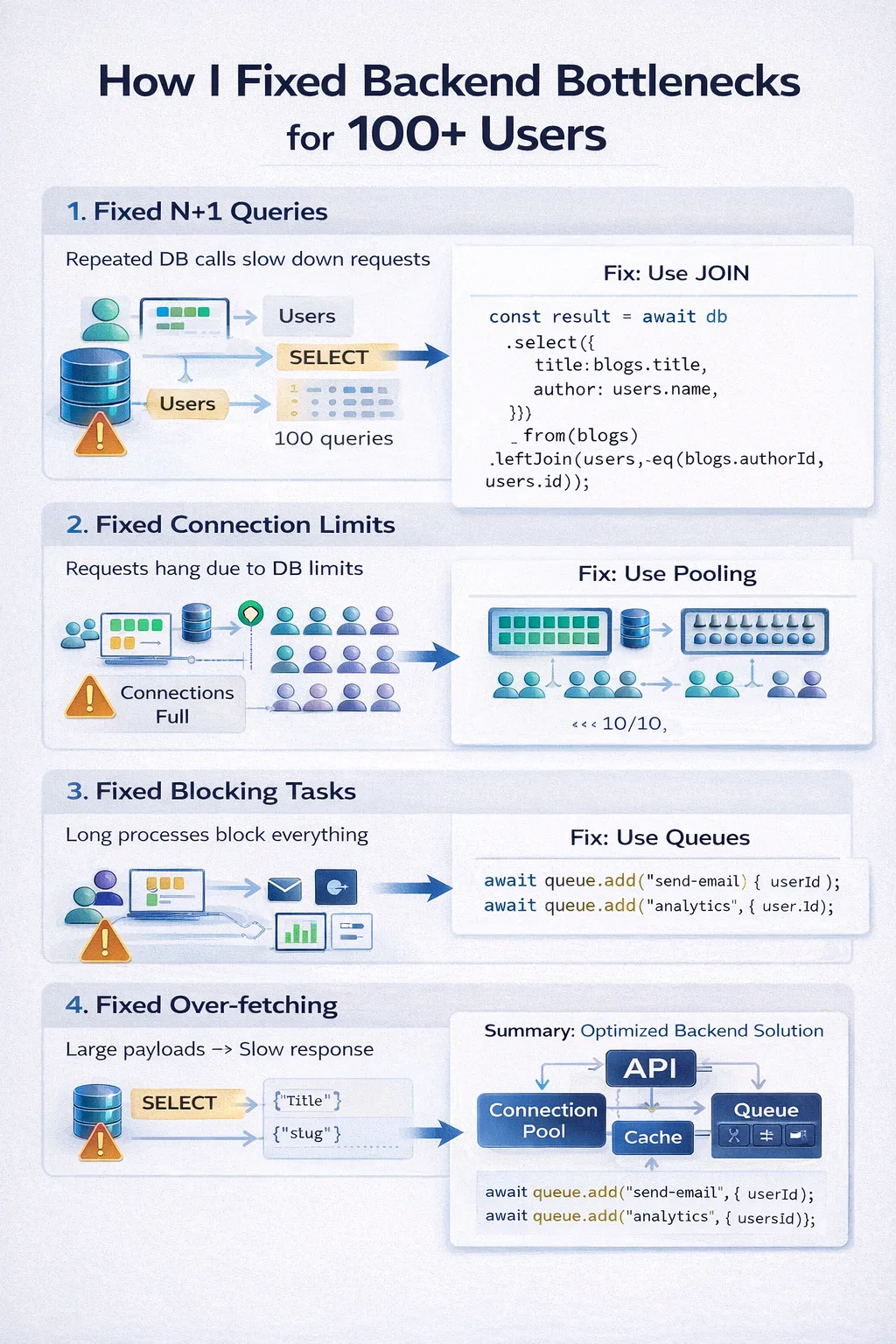
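One way to get past the single-request illusion is to fire many calls at the same handler at once and count what fails. The sketch below is only an illustration: `runConcurrently` and `makeFragileHandler` are hypothetical names, and the "fragile handler" is a stand-in for a real endpoint that leans on a shared resource with limited capacity.

```typescript
type Handler = () => Promise<void>;

interface LoadResult {
  concurrency: number;
  succeeded: number;
  failed: number;
  totalMs: number;
}

// Start `concurrency` calls at the same time and tally the outcomes.
async function runConcurrently(
  handler: Handler,
  concurrency: number,
): Promise<LoadResult> {
  const start = Date.now();
  const outcomes = await Promise.allSettled(
    Array.from({ length: concurrency }, () => handler()),
  );
  const succeeded = outcomes.filter((o) => o.status === "fulfilled").length;
  return {
    concurrency,
    succeeded,
    failed: concurrency - succeeded,
    totalMs: Date.now() - start,
  };
}

// Stand-in for a real endpoint (an assumption for this sketch): the shared
// resource behind it tolerates only `capacity` simultaneous callers.
function makeFragileHandler(capacity: number): Handler {
  let inFlight = 0;
  return async () => {
    inFlight++;
    try {
      if (inFlight > capacity) throw new Error("resource exhausted");
      // Simulated I/O wait
      await new Promise<void>((resolve) => setTimeout(resolve, 5));
    } finally {
      inFlight--;
    }
  };
}
```

Calling `runConcurrently(makeFragileHandler(10), 1)` reports no failures, while the same handler with a concurrency of 100 starts rejecting calls once the capacity is used up. The code is identical in both runs; only the number of simultaneous callers changed.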

Problem 1: N+1 Queries (Silent Killer)

What You Write

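    // Assumes a Drizzle-style `db` plus `blogs` and `users` table objects.
    // The result is named `allBlogs` so it does not shadow the `blogs` table.
    const allBlogs = await db.select().from(blogs);

    for (const blog of allBlogs) {
      // One extra round trip to the database per blog
      const [author] = await db
        .select()
        .from(users)
        .where(eq(users.id, blog.authorId));
    }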

What Happens

- 1 query to fetch blogs

- N queries for authors

👉 Total = N + 1 queries

If N = 100 → 101 queries per request

With 100 users making that request at the same time → 100 × 101 = 10,100 queries

Fix: Use JOIN

    const result = await db
      .select({
        title: blogs.title,
        author: users.name,
      })
      .from(blogs)
      .leftJoin(users, eq(blogs.authorId, users.id));

👉 1 query instead of 101
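To make the query count concrete without a real database, here is a minimal sketch using an in-memory stand-in that counts round trips. `CountingDb` and its methods are hypothetical, not part of any library. It also shows batching (one lookup for all author IDs), a different technique from the JOIN above that is sometimes used when a join is awkward; it costs 2 queries instead of 1.

```typescript
interface Blog { id: number; title: string; authorId: number }
interface User { id: number; name: string }

// Hypothetical in-memory database: every method call counts as one query.
class CountingDb {
  queries = 0;
  private blogs: Blog[];
  private users: User[];

  constructor(blogs: Blog[], users: User[]) {
    this.blogs = blogs;
    this.users = users;
  }

  async allBlogs(): Promise<Blog[]> {
    this.queries++;
    return this.blogs;
  }

  async userById(id: number): Promise<User | undefined> {
    this.queries++;
    return this.users.find((u) => u.id === id);
  }

  async usersByIds(ids: number[]): Promise<User[]> {
    this.queries++;
    const wanted = new Set(ids);
    return this.users.filter((u) => wanted.has(u.id));
  }
}

// N+1 pattern: one query for the list, then one more per row.
async function listNPlusOne(db: CountingDb) {
  const blogs = await db.allBlogs();
  const out: { title: string; author: string | undefined }[] = [];
  for (const blog of blogs) {
    const author = await db.userById(blog.authorId);
    out.push({ title: blog.title, author: author?.name });
  }
  return out;
}

// Batched pattern: one query for the list, one for all authors.
async function listBatched(db: CountingDb) {
  const blogs = await db.allBlogs();
  const ids = Array.from(new Set(blogs.map((b) => b.authorId)));
  const authors = await db.usersByIds(ids);
  const nameById = new Map(authors.map((u) => [u.id, u.name] as const));
  return blogs.map((b) => ({ title: b.title, author: nameById.get(b.authorId) }));
}
```

With 100 blogs, `listNPlusOne` leaves `db.queries` at 101 and `listBatched` leaves it at 2, while both return the same rows.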

Problem 2: Database Connection Limits

Without pooling, each request opens its own DB connection.

Example:

- 100 users

- Each request = 1 connection

👉 That is 100 simultaneous connections, and PostgreSQL's default max_connections is 100

What Happens

- New requests wait

- Queries slow down

- System appears "down"

Fix: Connection Pooling

Use pooled connections instead of opening new ones.

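    // Assuming the postgres.js driver
    import postgres from "postgres";

    // At most 10 open connections; extra queries wait for a free one
    const client = postgres(connectionString, {
      max: 10,
    });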

👉 Reuse connections instead of creating new ones
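What a cap like `max: 10` means under load can be sketched with a small counting semaphore. This is a conceptual model only, not how any particular driver implements its pool; `TinyPool`, `peakInUse`, and `maxWaiting` are hypothetical names added for illustration.

```typescript
// Conceptual pool: at most `max` holders at a time, waiters served in FIFO order.
class TinyPool {
  peakInUse = 0;   // highest number of slots held at once
  maxWaiting = 0;  // longest the wait queue ever got
  private inUse = 0;
  private waiters: Array<() => void> = [];
  private readonly max: number;

  constructor(max: number) {
    this.max = max;
  }

  private async acquire(): Promise<void> {
    if (this.inUse < this.max) {
      this.inUse++;
      this.peakInUse = Math.max(this.peakInUse, this.inUse);
      return;
    }
    // Pool exhausted: park this caller until a slot is handed over.
    await new Promise<void>((resolve) => {
      this.waiters.push(resolve);
      this.maxWaiting = Math.max(this.maxWaiting, this.waiters.length);
    });
  }

  private release(): void {
    const next = this.waiters.shift();
    if (next) {
      next(); // hand the slot straight to the next waiter; inUse is unchanged
    } else {
      this.inUse--;
    }
  }

  // Run `work` while holding one slot, and always give the slot back.
  async withConnection<T>(work: () => Promise<T>): Promise<T> {
    await this.acquire();
    try {
      return await work();
    } finally {
      this.release();
    }
  }
}
```

If 100 requests call `withConnection` on a `TinyPool` of 10 at the same moment, 10 run immediately and 90 wait their turn. The database never sees more than 10 connections, at the cost of queueing inside the application, so the pool size still has to be chosen with query duration in mind.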

Problem 3: Blocking Operations

Bad pattern:

    await createOrder();
    await sendEmail();
    await updateAnalytics();

👉 The response waits for all three, even though only the order has to finish before you can reply

Fix: Background Jobs

    await createOrder();
    await queue.add("send-email", { userId });
    await queue.add("analytics", { orderId });

👉 Fast response + async processing
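The key property of a queue is that adding a job only records it; the work itself runs later, usually in a separate worker process. Here is a minimal in-process sketch of that split. `InMemoryQueue`, `drain`, and `handleCheckout` are hypothetical names, and a production queue would also persist jobs and retry failures, which this sketch does not attempt.

```typescript
type Payload = Record<string, unknown>;
type Job = { name: string; payload: Payload };
type JobHandler = (payload: Payload) => Promise<void>;

class InMemoryQueue {
  readonly processed: string[] = [];
  private jobs: Job[] = [];

  // Enqueueing only records the job; it does not run it.
  async add(name: string, payload: Payload): Promise<void> {
    this.jobs.push({ name, payload });
  }

  get pending(): number {
    return this.jobs.length;
  }

  // Stand-in for a worker: runs queued jobs one by one, in order.
  async drain(handlers: Record<string, JobHandler>): Promise<void> {
    let job: Job | undefined;
    while ((job = this.jobs.shift()) !== undefined) {
      const handler = handlers[job.name];
      if (!handler) throw new Error(`no handler for ${job.name}`);
      await handler(job.payload);
      this.processed.push(job.name);
    }
  }
}

// Request path: create the order, enqueue the slow parts, reply right away.
async function handleCheckout(
  queue: InMemoryQueue,
  userId: number,
): Promise<{ orderId: number }> {
  const orderId = 1; // stand-in for the result of createOrder()
  await queue.add("send-email", { userId });
  await queue.add("analytics", { orderId });
  return { orderId };
}
```

After `handleCheckout` returns, both jobs are still pending, so the response time no longer includes sending the email or updating analytics. Those run only when the worker calls `drain`.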

Problem 4: Over-fetching Data

Bad:

    // Named `allBlogs` so the result does not shadow the `blogs` table
    const allBlogs = await db.select().from(blogs);

👉 Fetches every column of every row

Fix: Select Only Needed Fields

    // Named `blogSummaries` so the result does not shadow the `blogs` table
    const blogSummaries = await db
      .select({
        title: blogs.title,
        slug: blogs.slug,
      })
      .from(blogs);

👉 Smaller payload → faster response
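A rough way to see the difference is to compare the serialized size of full rows against rows with only the two fields a list page needs. The sketch below is an assumption-laden illustration: `BlogRow`, `makeRows`, and the column sizes are invented, and the projection here happens in memory, whereas the real saving comes from the database never reading or sending the extra columns.

```typescript
interface BlogRow {
  id: number;
  title: string;
  slug: string;
  content: string; // the large column a list page rarely needs
}

// Build `count` fake rows whose `content` is `contentLength` characters long.
function makeRows(count: number, contentLength: number): BlogRow[] {
  return Array.from({ length: count }, (_, i) => ({
    id: i + 1,
    title: `Post ${i + 1}`,
    slug: `post-${i + 1}`,
    content: "x".repeat(contentLength),
  }));
}

// Character count of the JSON body, a rough proxy for bytes on the wire.
function jsonSize(value: unknown): number {
  return JSON.stringify(value).length;
}

// Compare the full rows against a title/slug-only projection.
function compareSizes(rows: BlogRow[]): { full: number; projected: number } {
  const projected = rows.map(({ title, slug }) => ({ title, slug }));
  return { full: jsonSize(rows), projected: jsonSize(projected) };
}
```

For 100 rows with a few kilobytes of `content` each, the projected payload is a small fraction of the full one, and that gap is paid again on every concurrent request.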

Problem 5: No Indexing

Without index:

    SELECT * FROM blogs WHERE slug = 'post';

👉 Full table scan

Fix: Add Index

    CREATE INDEX idx_slug ON blogs(slug);

👉 Fast lookup
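As a loose analogy for why the index helps, compare checking every row against searching a sorted list. PostgreSQL's default index type is a B-tree, which is more involved than the binary search below, so treat this as a sketch of the scaling behavior only. `scanLookup` and `sortedLookup` are hypothetical helpers.

```typescript
interface LookupResult {
  found: boolean;
  comparisons: number;
}

// No index: compare the target against each row until it matches.
function scanLookup(slugs: string[], target: string): LookupResult {
  let comparisons = 0;
  for (const slug of slugs) {
    comparisons++;
    if (slug === target) return { found: true, comparisons };
  }
  return { found: false, comparisons };
}

// Index analogy: binary search over slugs kept in sorted order.
function sortedLookup(sortedSlugs: string[], target: string): LookupResult {
  let lo = 0;
  let hi = sortedSlugs.length - 1;
  let comparisons = 0;
  while (lo <= hi) {
    const mid = (lo + hi) >>> 1;
    const value = sortedSlugs[mid] as string;
    comparisons++;
    if (value === target) return { found: true, comparisons };
    if (value < target) lo = mid + 1;
    else hi = mid - 1;
  }
  return { found: false, comparisons };
}

// Zero-padded slugs so that string order matches numeric order.
function makeSlugs(count: number): string[] {
  return Array.from(
    { length: count },
    (_, i) => `post-${String(i).padStart(6, "0")}`,
  );
}
```

Looking up the last of 100,000 slugs takes 100,000 comparisons with the scan and at most 17 with the sorted search. The scan cost grows linearly with the table, while the sorted search grows only logarithmically.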

Summary Table

| Problem | Impact | Fix |
|---|---|---|
| N+1 Queries | Slow DB | JOIN |
| Connection Limits | Timeouts | Pooling |
| Blocking Tasks | Slow APIs | Queues |
| Over-fetching | Large payloads | Select fields |
| No Indexing | Slow queries | Index |

Key Insight

Your system doesn't break because of traffic.

It breaks because of inefficiencies multiplied by traffic.

Final Takeaway

1 user hides problems.

100 users expose them.

Closing Thought

Before scaling servers, ask:

👉 "What happens when 100 requests hit this at the same time?"

If the answer is unclear…

👉 your system will break.